Professor Saskia Goes is the recipient of the 2026 Augustus Love Medal of the Geodynamics Division for her outstanding contributions to our understanding of Earth structure and evolution, using integrative research at the confluence of geodynamics, seismology, mineral physics, and geochemistry. In this interview, she talks about her professional journey and shares her thoughts on what the future of ...[Read More]

The Geodynamics Division @ EGU26

The EGU General Assembly is only a few weeks away, and attendees are starting to plan their schedules for what promises to be a week full of exciting presentations and events. Don’t know where to start planning your week? We’ve got you covered! In today’s blog, we highlight the key events of the Geodynamics Division and give you some key tips to get the most out of the week. To access the e ...[Read More]

Interview ECS GD Awardee 2026 – Sia Ghelichkhan

The Division Outstanding Early Career Scientist Awards highlight exceptional scientific contributions made by an Early Career Scientist in the fields of Earth Sciences associated with each division. This year, the prestigious recognition for the Geodynamics Division has been awarded to Dr. Sia Ghelichkhan, from the Australian National University. Today we have the pleasure of interviewing him on hi ...[Read More]

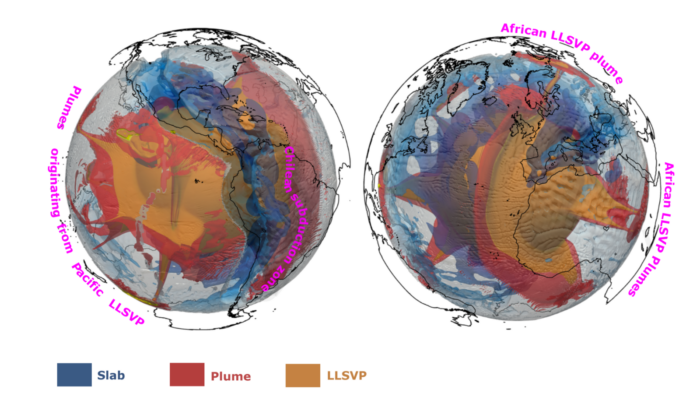

What’s blobbing inside the Earth? – insights from numerical modelling

Seismic waves tell us that something unusual is happening in the lowermost few hundred kilometers of Earth’s mantle. Beneath Africa and the Pacific lie two enormous thermochemical structures known as Large Low-Shear-Velocity Provinces (LLSVPs). These “large blobs” are slower to transmit shear waves, but beyond that, their physical nature remains one of the biggest open questions in deep Earth geod ...[Read More]