Seismicity is undoubtedly an integral part of geodynamics, since seismic data from large-scale geophysical monitoring can provide many valuable insights into the state of the Earth’s crust. Seismicity, however, is not always natural; it can also be induced. In this week’s blog post, we explore the subject of fluid injection-induced seismicity, mainly through the lens of hydraulic fracturing (HF; ...

At the Mountains of Madness: Lovecraft Applied for Geology (and Failed)

“I am forced into speech because men of science have refused to follow my advice without knowing why. It is altogether against my will that I tell my reasons for opposing this contemplated invasion of the antarctic—with its vast fossil-hunt and its wholesale boring and melting of the ancient ice-cap—and I am the more reluctant because my warning may be in vain.” The opening lines of At the Moun ...

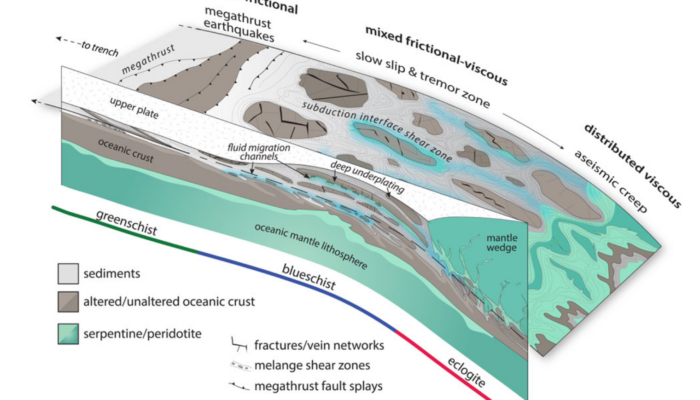

Subduction interfaces are complicated – and that’s their beauty!

The dynamics of subduction zones are strongly influenced by the subduction interface. Understanding its rheology enables geodynamic modellers to better simulate these systems and unravel the fundamental processes that govern them. In today’s blog post, we explore subduction interface rheology and discuss effective approaches for modelling it. What is a subduction interface? I first met a subductio ...

Under pressure: measuring stress within the crust

At the geodynamic scale, tectonic forces guide the distribution of stress. Stress in the Earth is not uniform but varies in space. Variations in gravitational potential energy caused by changes in mass distribution within the Earth, forces acting at plate boundaries, and basal mantle drag all cause stress to vary in magnitude and act in different directions. Overall, stress plays a key role in tectonics. It allow ...