Science is all about discovering new things. But how do we make these discoveries, adding to the ever-growing body of knowledge? This week, we sit down with one of our editors, Antoniette Greta Grima, a Postdoctoral Fellow at the University of Texas at Austin, to understand what it takes to discover a new slab process. Thanks for sitting down with us this week! First things first, which subductio ...

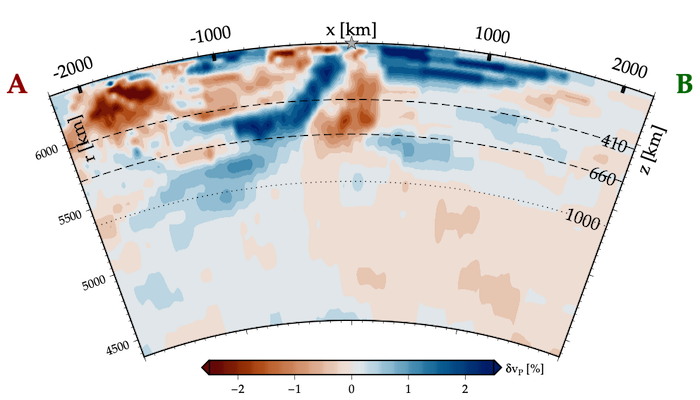

Orphaning: Discovering New Subduction Processes