If you were suddenly told you were living in a different time period, what would your immediate reaction be? Changes in the calendar – even if it’s just terminology – have proven emotive in the past. In 1752, when England shifted from the Julian to the Gregorian calendar and 11 days were cut from that September to catch up, there are suggestions that civil unrest ensued.

Once again, the name of the period in which we live has recently changed; the Holocene is now subdivided into three parts, and we’re now living in the Meghalayan age, according to the International Commission on Stratigraphy (ICS). While there weren’t riots in the streets this time, it has proved controversial for some researchers.

The division of time into different epochs and eras is an important part of stratigraphy. While time marches on, ignorant of the names humans give to its divisions, defining periods like the Cretaceous and Jurassic helps scientists compare results from around the world, even where the fossil and sedimentary records differ. It also brings into sharp focus the globally significant differences between each period, often including the devastating mass extinctions that mark the boundaries of a handful of these periods.

For at least a century, the Holocene has been the favoured term for the period in which we live, with its beginning marked by the end of the last ice age. Experts have defined the date at which the Holocene began more and more closely over time, arriving at the now accepted value of approximately 11,650 calendar years before present. The Holocene encompasses the emergence of human civilisation, and represents a period of warmer, relatively stable climate in comparison with the prior ice age.

After considerable debate, however, the ICS has decided that the Holocene should be further subdivided; now, the period from 11,650 to 8,200 years before present is the Greenlandian; the Northgrippian stretches from 8,200 to 4,200 years before present, and the Meghalayan defines the time between then and the present. Why did the Holocene need to be divided up in this way? If it wasn’t broken, why fix it?

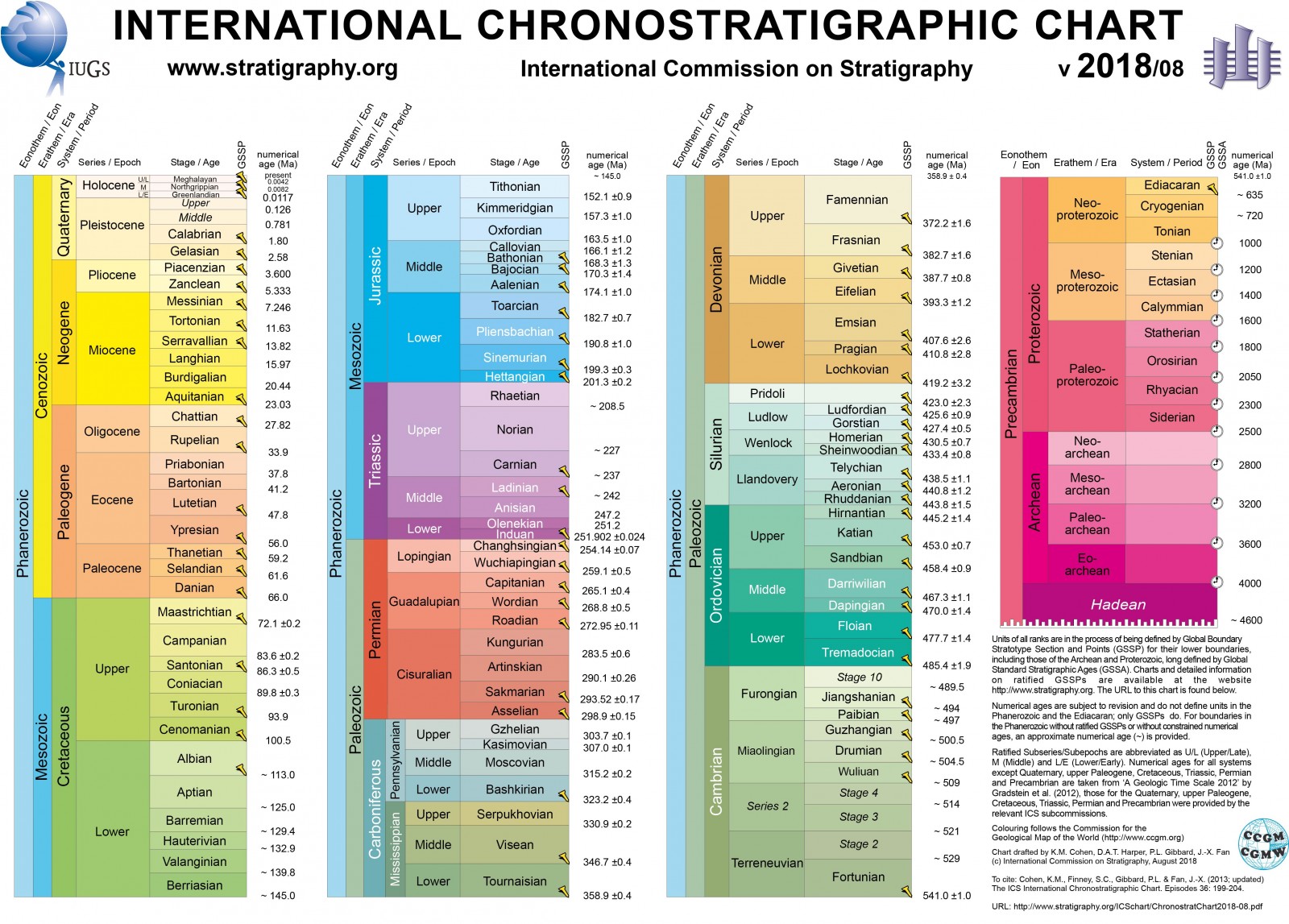

The International Commission on Stratigraphy (ICS) has updated the timeline for the earth’s full geologic history, dividing the Holocene into three distinct periods. What does that mean for the Anthropocene? (Credit: International Commission on Stratigraphy)

The distinctions between an ice age and a warmer period, also known as an interglacial period, are globally significant, and a good place to start when describing how Earth’s climate has changed over the past few hundred thousand years. The swings in global temperature and ice extent are large enough that we often ignore the subtler climate changes that occur within an interglacial or glacial period. However, sediment and fossil records from more recent eras are relatively well preserved (simply because those records have had less chance to be destroyed by other geological processes), and this enables us to explore more recent periods in finer detail. Looking within the Holocene, the transition between the Greenlandian and Northgrippian is marked by a dramatic cooling of the climate, while the Northgrippian – Meghalayan transition is marked by an abrupt ‘mega-drought’ and cooling that affected the nascent agricultural societies developing at that time.

By dividing the Holocene into these bite-sized chunks, the ICS has drawn attention to these changes in the earth’s geological system and provided a global context to the climatic shifts of the last ten thousand years. It also helps emphasise that climate can and does change on timescales much shorter than glacial-interglacial cycles – something we need to remember when considering the likely effects of anthropogenic climate change.

So far, so scientific. So why have the changes upset some people? Well, there’s an elephant in the stratigraphic room that looms larger now that these changes have been officially ratified. If there’s anything that has marked out the Holocene as fundamentally different from other historical ages, it’s the growth of human society. In particular, we are now at a point in history where the actions of a specific species – humans – can have global effects on the stratigraphic record.

Humans have added large quantities of carbon dioxide to the atmosphere, spread radioactive isotopes from nuclear bomb testing across the oceans, and left waste deposits in environments from the top of Mt Everest to the middle of the Pacific Ocean. Many of these impacts could leave lasting traces in the sedimentary and fossil records, leading some scientists to call for a new period of time – the Anthropocene. And this may not fit well with the ICS changes.

I spoke with Helmut Weissert, President of the EGU Stratigraphy, Sedimentology and Palaeontology Division, about these changes, and he suggested that the subdivisions devised by the ICS might shift the debate over the Anthropocene, at least in the short term:

“I am quite worried. After the introduction of the new subdivisions I cannot see how the Holocene working group soon will vote for a further subdivision of the Holocene. The Anthropocene working group is confronted with a difficult task. I can envisage that the Anthropocene will be used as an informal term, not officially defined and introduced into the Stratigraphic Chart. I use the term regularly in my writing and in talks, everybody understands the term, I can explain how man is a geological agent. So, we may have to continue using an excellent term which is not yet properly defined, but most people do not care about the definition.”

The Anthropocene is certainly an effective term to draw the attention of the wider public to the impact of society on global geological cycles. But from a stratigraphic perspective, it poses a number of challenges. Where and when, for example, should the beginning of the period be set? Defining a boundary between geological periods requires specific chemical changes that can be identified globally and an internationally agreed reference point – a physical location – that defines the base of the section. There are many potential examples that could be chosen to define the beginning of human interference in the natural system: ice cores showing the uptick in carbon dioxide at the industrial revolution, or ocean sediments attesting to nuclear bomb tests in the 1950s. But the choice of which section to pick is fraught.

Each stratigraphic division needs a reference point that defines the split between the prior time period and the one in question. Here, a ‘golden spike’ defines the base of the Ediacaran period (635 million years ago) in the Flinders Ranges of South Australia. (Credit: Bahudhara via Wikimedia Commons)

Moreover, preservation is a crucial part of stratigraphy; how much of human impact will in fact be preserved, especially after further anthropogenic changes? What if we clean up the environment? What if we dredge the ocean floor for rare metals, and, in doing so, extirpate the signal of the 1950s nuclear bomb tests? What if we melt the ice caps that record the incipient CO2 increases from the industrial revolution? Sure, these changes may be recorded elsewhere, but how can we be sure a reference stratigraphic section will remain intact?

And this brings us to a perhaps more philosophical point: what if the human impact on the natural system we see today is only a fraction of what is to come? Any Anthropocene we define now would be based only upon the impact to date, but future changes may make these seem small in comparison. What would come after the Anthropocene? The question echoes that of 20th-century philosophers asking what comes after Post-Modernism. Perhaps instead of stratigraphy, we should look to written history and recorded data to better contextualise our impact.

Whether we end up defining our current era as the Meghalayan, the Anthropocene, or something else, it seems clear that the debate has drawn increased attention to the short-term climate changes – and in particular those driven by human intervention. A better public appreciation of our role within the natural system is a vital step in limiting damaging future climate change.

by Robert Emberson

Robert Emberson is a Postdoctoral Fellow at NASA Goddard Space Flight Center, and a science writer when possible. He can be contacted either on Twitter (@RobertEmberson) or via his website (www.robertemberson.com)