Artificial Intelligence, and its rapid incursion into the (geo)sciences, was already impossible to ignore at last year’s EGU General Assembly (you can read my reflections from then in this blog post). This year, unsurprisingly, it felt equally present. On Thursday, I attended the Great Debate on “The ethics of using AI in Geosciences: opportunities and risks”, a discussion spanning everything from scientific integrity and transparency to environmental costs, bias, and human responsibility.

Like many conversations around Generative AI, it was sprawling at times, making it difficult to reduce to a single thread. Perhaps that speaks to the fact that “AI ethics” itself is usually discussed as if it were something external to us: a framework to develop, a policy to write, a set of guidelines to package neatly into an institutionally branded document. In reality, some of the most important ethical decisions around AI can be quite personal and, in a way, rather simple.

You could use Generative AI to help refine and correct your grammar for a peer review written in a language that is not your first. You could also ask it to write the review for you. Technically, they both involve AI-generated text. Ethically, they are worlds apart.

That distinction matters because, despite frequent discussions about regulation, detection tools, and institutional guidance, much of scientific integrity still depends on individual choices. Many researchers act ethically not because they are constantly monitored or because misconduct is easy to detect, but because science relies, to a remarkable extent, on personal responsibility: on judgement, restraint, and the willingness to engage critically and honestly with the work you are doing.

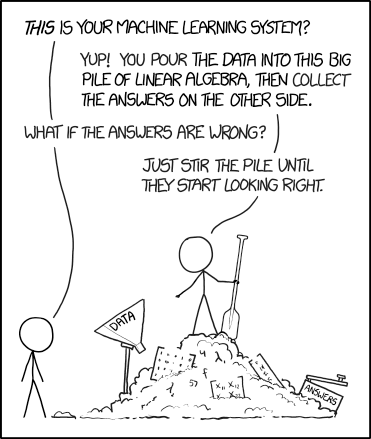

Perhaps that is what makes the current moment feel so important. AI is not only changing what scientists can do, but potentially how scientists think, and how much thinking we choose to outsource to a co-worker that is always helpful, perhaps at times suspiciously so.

At its broadest level, AI ethics is concerned with how these systems are built, trained, deployed, and used (and with the consequences that emerge from those choices). Some concerns relate to the data used to train AI models: where it comes from, what biases it may contain, whether people consented to its use, and whether copyrighted or sensitive material is involved. Others focus on transparency and accountability: whether humans can meaningfully understand how a system reached a conclusion, who is responsible when errors occur, and how these technologies should be regulated in high-impact contexts such as healthcare, law, or education. Finally, there are also broader societal concerns, ranging from environmental costs to misinformation, labour displacement, surveillance, and the possibility of increasingly powerful AI systems being used irresponsibly.

The recent report by the IUGS Commission on Geoethics approaches many of these issues directly, offering recommendations for the ethical use of AI in the geosciences. Going through every aspect of AI ethics in detail would be difficult in a single blog post (and would probably ensure nobody reaches the end of it). So instead, I want to focus on a few ideas from the debate that stayed with me afterwards.

The first one is agency.

Ultimately, ethical AI use is less about what AI can do and more about what you choose to delegate to it, and about recognising that this remains a choice.

This may sound obvious, but AI is often described in conversation as something happening to scientists rather than something scientists actively decide to use. The language surrounding it is strangely passive: AI is transforming research, changing publishing, and disrupting education. AI isn’t doing any of those things; we are. The responsibility for how we engage with these systems remains ours.

You choose whether to verify the output of a model before including it in your work. You choose whether to rely on AI-generated summaries instead of reading papers yourself. You choose whether AI acts as an assistant to your thinking or begins replacing parts of the thinking process altogether. As Emma Ruttkamp-Bloem (one of the panellists) said:

“once AI is part of your process (in whichever way you choose), the work you need to do after you’ve generated content with AI is not insignificant. The key is, you have to want to do it.”

The debate repeatedly returned to this point, directly and indirectly. Not necessarily through dramatic warnings about artificial intelligence itself (at no point in the debate did I feel like any of the panellists discouraged AI use in science), but through reminders that scientific integrity cannot be outsourced. Responsibility does not disappear simply because a tool becomes more powerful or more convenient. Convenience matters, and AI systems are extraordinarily good at reducing friction: drafting text, summarising information, generating code, organising ideas. But reducing friction can also reduce reflection. The easier it becomes to automate parts of scientific work, the easier it may become to disengage from them intellectually while still remaining accountable for the result.

This takes me to the second idea that stayed with me after the debate: the importance of critical thinking not simply as a scientific skill, but as part of scientific ethics itself.

There is often concern that over-reliance on AI could lead to an erosion of skills (I wrote about this in last year’s blog post), but in the context of ethical use of AI, what the debate made me reflect on is the relationship between critical engagement and responsibility.

Science is not only about producing outputs. It is also about understanding how those outputs were reached, recognising uncertainty, identifying limitations, questioning assumptions, and being able to defend conclusions. Many of these processes are cognitively demanding, slow, and occasionally uncomfortable. They require attention, they take effort… if only there were an easier way, right?

AI can absolutely support these processes. It can help researchers work across languages, assist with coding, improve accessibility, and accelerate routine tasks. But there is a meaningful difference between using AI to support reasoning and using it to bypass reasoning altogether. Paraphrasing Emma again, “Know why you are interacting with AI, think about not being manipulated. It’s not paranoia, it’s just acknowledging that AI often cuts reflection time, which is a key part of our process. Research is about learning and reflection. It’s not about doing it as fast as possible.”

Perhaps this is why discussions around AI in science can feel ethically uneasy even when no obvious misconduct has occurred. The concern is not always that researchers are “cheating” in some concrete way. To me, the more concerning thought is that they may gradually stop fully inhabiting the intellectual processes for which they are still held accountable.

As I wrote at the start of this piece, ethical AI use is really difficult to police. Like many other aspects of science, it relies on trust at the individual level. That makes it a collective responsibility to make choices because they are ethical, rather than because of the consequences for ourselves if we do not.

Nevertheless, some organisations are now developing guidelines and recommendations for the ethical use of AI, with practical and actionable suggestions for researchers. These are not intended as rigid rules, but as tools to help people develop the knowledge, awareness, and agency needed to make thoughtful choices that protect both science and scientists.

I will conclude by quoting the IUGS report, because I don’t think I can phrase this better than they already have:

“Ethics is not just about rules or consequences; it is situational, emotional, empathetic and relational. It is about moral character. Virtue ethics is a habitual disposition to act rightly – what a good and wise person would do.”

Choose to be a good and wise scientist.