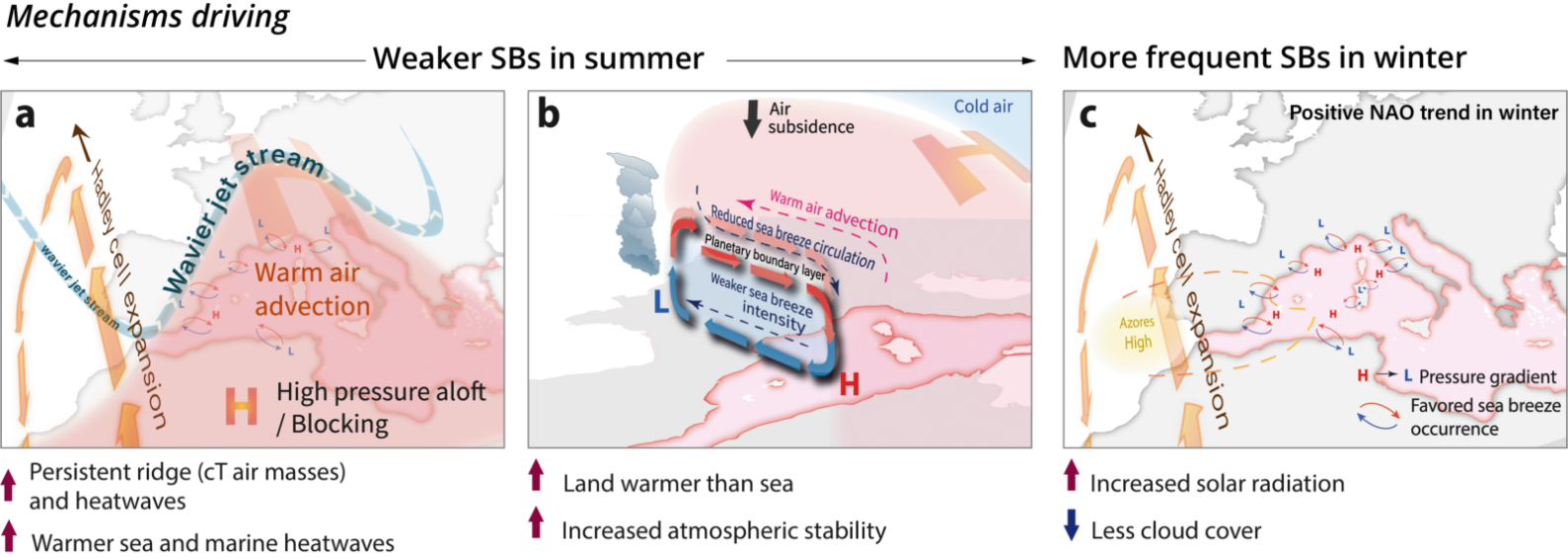

For the 500 million people living along the Mediterranean coast, sea breezes are an essential component of the regional climate. They are more than pleasant coastal winds: among other roles, they ease summer heat stress, disperse pollutants, and trigger convection (the rapid upward movement of warm, moist air), which sometimes leads to severe storms. But the Mediterranean ...

Inside the Baltic Sea N2O Hunt: Tracing Sources Using Isotopic Tools

Nitrous oxide (N2O), commonly known as laughing gas, is one of the most important greenhouse gases, and its rise in the Anthropocene contributes significantly to global warming and to the depletion of stratospheric ozone. The marine environment, especially coastal and marginal seas, is an important contributor to the global atmospheric N2O source, accounting for about 25% of it. Nitrous oxide is primarily produced in mar ...

Studying societal climate impacts: why is it hard, and what can we do about it?

Due to a rapid rise in temperature, rain began falling on the snow- and ice-covered roads, prompting the regional public transport operator to suspend all bus services. The rain also caused icing on the overhead lines of the main railway line into town, and rail traffic was temporarily suspended as well. Before the adjacent highway could be salted, several dozen cars were involved in a ch ...

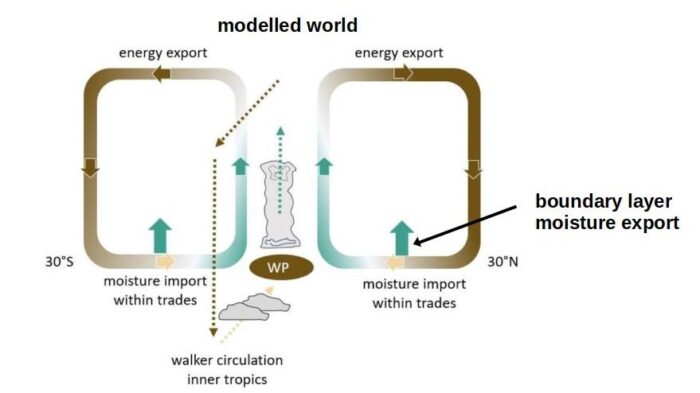

When Small-Scale Turbulence Imprints on the Global Atmospheric Circulation: Uncovering the Cause of the Double Intertropical Convergence Zone Bias in ICON

One feature stands out in any map of tropical rainfall from satellites: a narrow band of intense precipitation encircling the globe near the equator. This is the Intertropical Convergence Zone, a key feature of the global atmospheric circulation that imports moisture into the tropics and exports energy to higher latitudes. But for decades, climate models have struggled to simulate this feature cor ...